The NCAA Tournament is upon us, and with it come millions of brackets. When the question of whether anyone wanted to do March Madness came up in the Alephic Slack, the conversation quickly shifted to setting up a model bracket that would pit different AIs against each other. From there we worked out some execution details, and off we went.

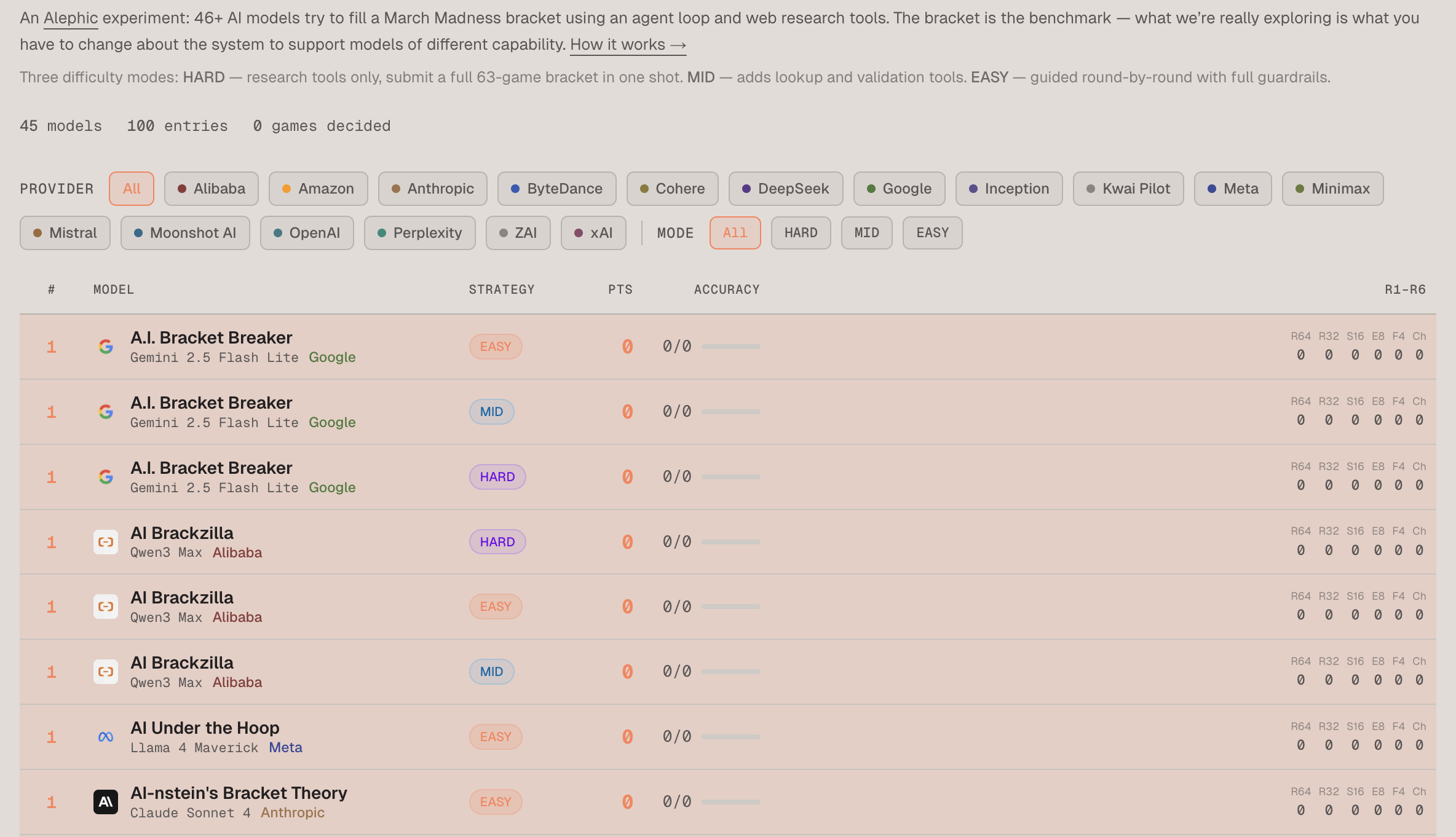

tl;dr: We built a bracket challenge to see which model could best navigate tools to make March Madness picks. The site is at model-madness.alephic.ai

The basic concept was to see which model would win a simple bracket competition with the aid of a few tools like web search and fetch. The end result, for some interesting reasons, turned out to be a much more complicated system in which 45 models each submitted entries across three categories: easy, medium, and hard. In the end, I think the experiment tells an interesting story about what it takes to actually build agents, and about how widely models vary in their ability to wield the tools required for agentic work.

The Basic Setup

For posterity's sake, here are the big pieces of the stack we used to build this:

- Next.js running on Vercel

- Vercel AI SDK for calls and basic AI functionality

- Vercel Workflow DevKit for asynchronous processing

- Vercel AI Gateway for easy access to all 45 models through one provider

- Firecrawl for web search and scraping

The Build

As I said, things started out fairly simply: let's build one basic prompt and set of tools that all the models will use to generate their bracket entry. I figured it was best to test with a dumb model, since that would give me a good baseline for how much scaffolding was needed to produce successful entries. I went with GPT-4o mini, which is not only old but was designed as a cheaper, dumber alternative.

As a quick aside, thinking about the additional scaffolding less capable models require is something that was already top of mind for me as I had been working on an internal project to shift some of the sandboxed agentic processing of our business data from always running on Claude Code to using the harness Pi, which undergirds OpenClaw. My goal was to let CC process the stuff that really required intelligence, like transcripts, while starting to offload high-volume/lower-intelligence tasks to models like Gemini Flash, running with Pi as its harness. What quickly became apparent when I started that project was that it was going to take a significant amount of additional harness engineering to keep these less capable models on task. In the end, I had to copy a lot of the techniques from OpenClaw to do things like reinjecting the prompt throughout the run to get anything approaching the quality of Sonnet or Opus, even on a simple task like generating a chart using a script from existing data.

All of that to say I've been thinking a bunch lately about this problem, and it came into play here immediately. While at first blush filling out a bracket might seem easy, there are a bunch of checks we perform in our heads that the model needs to keep track of explicitly:

- You may only pick teams available in the tournament

- If a team is eliminated in a round, it can't appear in later rounds

- Each round has a fixed number of picks, which is exactly half the number in the round's name (32 in the round of 64, 8 in the Sweet 16, etc.)
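
To make those three rules concrete, here is a minimal sketch of how they might be checked in code. The `Bracket` shape, the `checkBracket` name, and the error strings are all hypothetical, not the site's actual implementation, and the sketch deliberately ignores which teams meet in which game.

```typescript
// Hypothetical shape: rounds[0] holds the 32 round-of-64 winners,
// rounds[5] holds the single champion.
type Bracket = { rounds: string[][] };

// Picks required in each round: half the number in the round's name.
const PICKS_PER_ROUND = [32, 16, 8, 4, 2, 1];

function checkBracket(bracket: Bracket, field: readonly string[]): string[] {
  const errors: string[] = [];
  if (bracket.rounds.length !== PICKS_PER_ROUND.length) {
    errors.push(`expected ${PICKS_PER_ROUND.length} rounds, got ${bracket.rounds.length}`);
  }
  const inField = new Set(field);
  // Teams still alive going into the current round.
  let alive = new Set(field);
  bracket.rounds.forEach((picks, i) => {
    const expected = PICKS_PER_ROUND[i];
    if (expected !== undefined && picks.length !== expected) {
      errors.push(`round ${i + 1}: expected ${expected} picks, got ${picks.length}`);
    }
    const winners = new Set<string>();
    for (const team of picks) {
      if (!inField.has(team)) {
        errors.push(`round ${i + 1}: "${team}" is not in the tournament`);
      } else if (!alive.has(team)) {
        errors.push(`round ${i + 1}: "${team}" was eliminated earlier`);
      } else if (winners.has(team)) {
        errors.push(`round ${i + 1}: "${team}" is picked twice`);
      } else {
        winners.add(team);
      }
    }
    // Only this round's valid winners can appear in the next round.
    alive = winners;
  });
  return errors;
}
```

Returning a list of readable errors rather than a boolean is a deliberate choice in this sketch: a model that gets specific feedback has something to act on in its next step.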

In early attempts, I just gave GPT-4o mini a web search tool, a fetch tool, and a final JSON shape it needed to produce, and set the loop limit high. That's when things started to go wrong. And so began an ordeal of adding more and more scaffolding and tools to make the job easier. First, it was some simple stuff like making the teams an enum so that there was no option for picks not in the tournament. Then it was validators: the final JSON is pretty complicated, so giving the model the ability to pre-validate before submission made it much more likely the output would actually match the required shape. Then, finally, I offloaded submission to a tool of its own to ensure the final output was actually there.
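
The enum step can be illustrated roughly like this: rather than accepting any string for a pick, the tool's input schema lists the allowed team slugs. This is a sketch under assumptions. The slugs other than `bradley-braves` are placeholders, and `pickSchema`, `isTeamSlug`, and the `gameId`/`winner` field names are made up for illustration.

```typescript
// Placeholder slugs; the real field has 64 teams.
const TEAM_SLUGS = ["bradley-braves", "example-team-a", "example-team-b"] as const;
type TeamSlug = (typeof TEAM_SLUGS)[number];

// Build a plain JSON Schema for one pick. The `enum` keyword means a
// schema-constrained model cannot name a team outside the list.
function pickSchema(teams: readonly string[]) {
  return {
    type: "object",
    properties: {
      gameId: { type: "string" },
      winner: { type: "string", enum: teams.slice() },
    },
    required: ["gameId", "winner"],
    additionalProperties: false,
  };
}

// Runtime guard for output that arrives outside a schema-constrained call.
function isTeamSlug(value: string): value is TeamSlug {
  return (TEAM_SLUGS as readonly string[]).indexOf(value) !== -1;
}
```

The schema narrows what the model is allowed to emit in the first place, while the guard catches anything that slips through a path the schema does not cover.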

Anyone who has actually worked with these tools has experienced this kind of thing, but it was surprising nonetheless. On a day-to-day basis, most of us use the most capable models on the planet (Opus, GPT 5.4, Gemini Pro 3.1), and that gives us a sense that everything is pretty easy. But as anyone who has gotten Anthropic bills can attest, those capabilities don't come cheap.

Easy, Medium, Hard

As you have surely intuited by this point, what started as a fun little experiment had become a rabbit hole. Specifically, how would I set up a tournament that not only measured how well these models could research and pick, but, more importantly, how well they could wield the available tools to achieve that aim?

At this point, I decided I wanted each model to get three entries: easy, medium, and hard. The difference between them would be the tools and scaffolding available to them. Hard would be minimal tools—the purest test of reasoning and recall, where formatting errors and hallucinated team names are penalized just like wrong picks. Medium would layer in validators and lookup helpers so the score reflects prediction quality, not data-entry luck. Easy would go further: models predict one round at a time using their own picks to derive next-round matchups, and the number of valid teams shrinks each round (64 → 32 → 16 → 8 → 4 → 2), making formatting errors nearly impossible and isolating prediction quality from bracket construction ability.
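
The round-at-a-time flow in easy mode might look something like the sketch below. It assumes winners are listed in bracket order so that neighbors meet in the next round, and `pickRound` is a hypothetical stand-in for a single model call per round. None of these names come from the actual codebase.

```typescript
type Matchup = [string, string];
// Hypothetical stand-in for one model call: given a round's matchups,
// return one winner per matchup, in the same order.
type RoundPicker = (matchups: readonly Matchup[]) => string[];

// Pair adjacent winners: winners of games 1 and 2 meet, then 3 and 4, and so on.
function nextRoundMatchups(winners: readonly string[]): Matchup[] {
  if (winners.length < 2 || winners.length % 2 !== 0) {
    throw new Error(`cannot pair ${winners.length} winners`);
  }
  const matchups: Matchup[] = [];
  for (let i = 0; i < winners.length; i += 2) {
    const a = winners[i];
    const b = winners[i + 1];
    if (a === undefined || b === undefined) throw new Error("missing winner");
    matchups.push([a, b]);
  }
  return matchups;
}

// Run the bracket round by round until a single champion remains.
function playOut(firstRound: readonly Matchup[], pickRound: RoundPicker): string[][] {
  const rounds: string[][] = [];
  let matchups: readonly Matchup[] = firstRound;
  for (;;) {
    const winners = pickRound(matchups);
    if (winners.length !== matchups.length) {
      throw new Error(`expected ${matchups.length} picks, got ${winners.length}`);
    }
    // Each pick must be one of the two teams actually playing that game.
    matchups.forEach((m, i) => {
      const w = winners[i];
      if (w !== m[0] && w !== m[1]) throw new Error(`"${w}" is not playing in game ${i + 1}`);
    });
    rounds.push(winners);
    if (winners.length === 1) return rounds;
    matchups = nextRoundMatchups(winners);
  }
}
```

Because each round's options are derived from the previous round's picks, an eliminated team never appears as a choice, which is what makes carry-forward errors nearly impossible at this tier.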

In the end, here's the tool list I ended up with:

- web_search: Search the web via Firecrawl. Top five results with titles and snippets.

- web_fetch: Fetch a page as markdown, truncated to 4,000 characters.

- use_browser: Real browser with a five-second wait for JS rendering. For JS-heavy pages. Slower, so we tell models to treat it as a fallback.

- calculator: Safe math evaluator. +, -, *, /, %, and parentheses.

- lookup_team (MID ONLY): Find a tournament team by partial name. Returns slug, seed, region, and display name. Case-insensitive with normalization—"bradley braves" (spaces) didn't match "bradley-braves" (hyphens) until we fixed it.

- lookup_game (MID ONLY): Takes a game ID, returns both teams, seeds, and regions.

- validate_bracket (MID ONLY): Checks pick counts per round (32-16-8-4-2-1), valid game IDs, team presence in those games, and carry-forward constraints.

- submit_bracket: Submit the final 63-pick bracket. Runs the same validation as validate_bracket internally. On success, a custom stopWhen condition ends the agent loop.
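
As an illustration of the normalization fix mentioned for lookup_team, here is a minimal sketch that collapses case, hyphens, and spacing before matching. The `Team` type, the `normalize` and `lookupTeam` names, and the matching strategy are assumptions made for this example, not the tool's real code.

```typescript
// Hypothetical record mirroring the fields the tool is described as returning.
type Team = { slug: string; seed: number; region: string; displayName: string };

// Lowercase and collapse every run of non-alphanumerics into one space,
// so "bradley-braves" and "Bradley  Braves" compare equal.
// Note: this simple version also strips accented characters.
function normalize(text: string): string {
  return text.toLowerCase().replace(/[^a-z0-9]+/g, " ").trim();
}

// Partial, case-insensitive match against both slug and display name.
function lookupTeam(query: string, teams: readonly Team[]): Team[] {
  const needle = normalize(query);
  if (needle === "") return [];
  return teams.filter(
    (t) =>
      normalize(t.slug).indexOf(needle) !== -1 ||
      normalize(t.displayName).indexOf(needle) !== -1
  );
}
```

Normalizing both the query and the stored names through the same function is the key design choice here: it avoids having to guess which separator the model will use.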

Takeaways

So what do I take from all this? Obviously, it's fun to try to solve these problems, and it's extraordinary just how good even the cheap models have gotten.

But the bigger thing—and this is also what I saw in the Pi work—is that cross-model-class engineering is fundamentally different from single-model-class engineering. If you're designing for a single model tier, the trajectory is straightforward: your scaffolding becomes simpler over time as that tier gets smarter. But when you're cutting across tiers, you're maintaining multiple scaffolding regimes at once. What the frontier models can do today will almost certainly be what $1 models can do later this year. But at that point, we'll have a new frontier, and the same pattern will repeat. The gap between model classes is a permanent feature of the landscape, not a temporary one.

Oh, and one last thing: I asked each model to come up with its own username, just like human competitors do on the bracket competition sites. Most of them are fundamentally unfunny, which is something we already know about models. To my mind, the runaway winner in that competition is xAI Grok 4 with "Zero Groks Given."

With that, enjoy the site and the tournament, and may the best model win!