Gemini Flash has been on my mind a lot lately. Partly because Flash 3 came out and I've been doing a bunch with it (including processing 15 years of Evernote notes over a weekend), and partly because GPT-5.4 dropped, which means we have a new potential leader in the frontier LLM category. I mention that last bit because the title of best model in the world feels like a horse race: depending on the month and day, it could be OpenAI, Anthropic, or Google ahead by a nose. That race matters a lot to my day-to-day, but I'm not convinced swapping one leader for another would change much. They're all excellent, and they all cost roughly the same.

And then there's Gemini Flash. Neither Anthropic's Claude Haiku nor OpenAI's GPT-5 Mini comes close to the smarts per dollar that Flash offers.

My favorite Flash story is from about a year ago, when a customer called us needing something in a pinch. They had 2 terabytes of b-roll photos and video that needed to be tagged and organized within a week for an upcoming campaign. They were an existing customer, and we were happy to help (we take seriously our commitment to help solve a CMO's most pressing challenges, whatever they may be). But before taking it on, I wanted to make sure the cost made sense. So I took a gig of the 2 terabytes, built a taxonomy, and ran it through Gemini 2.5 Flash. And then I ran it again. And then a third time, because I kept thinking I'd screwed up the math. The total projected cost in tokens for the whole project: $60.
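
The projection itself is just linear extrapolation: price a small sample, then multiply by how many samples fit in the full archive. Here's a minimal Python sketch of that arithmetic, assuming cost scales linearly with data size (in practice, token usage tracks image count and video duration more closely than raw bytes). The function name is hypothetical, and the $0.03 sample cost is back-derived from the $60 total assuming decimal units (2 TB = 2,000 GB); it is not a number from the actual run.

```python
def project_cost(sample_cost_usd: float, sample_gb: float, total_gb: float) -> float:
    """Linearly extrapolate the cost of a sample run to the full dataset."""
    if sample_gb <= 0:
        raise ValueError("sample_gb must be positive")
    # Scale the sample cost by how many sample-sized chunks fit in the total.
    return sample_cost_usd * (total_gb / sample_gb)


# Hypothetical inputs: a 1 GB sample costing $0.03, back-derived from the $60 total.
SAMPLE_COST_USD = 0.03
SAMPLE_GB = 1.0
TOTAL_GB = 2_000.0  # 2 TB in decimal units

projected = project_cost(SAMPLE_COST_USD, SAMPLE_GB, TOTAL_GB)
print(f"Projected cost for {TOTAL_GB:,.0f} GB: ${projected:,.2f}")
```

Because the sample cost gets multiplied by roughly 2,000, a small mistake in it becomes a big one at full scale, which is why rerunning the sample is a cheap sanity check.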

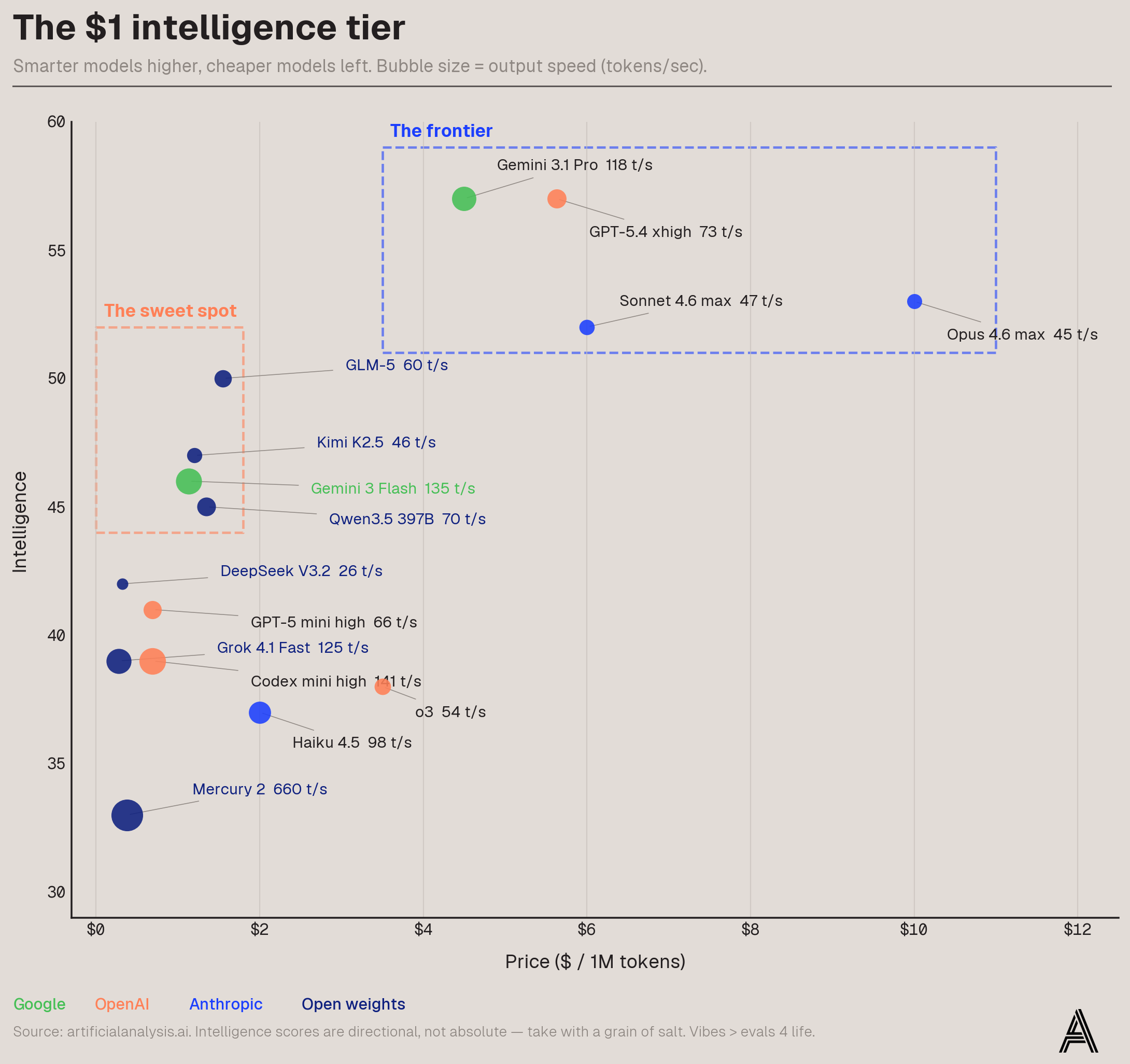

Flash (now on version 3) is unlike anything else on the market from the three main players. It's not something I use in my coding harness (yet), though I am experimenting with it in harnesses like Pi for sandboxed workloads at scale. But when you have a ton of work to do and you need it done well, it's almost impossible to beat. Artificial Analysis pulls together evals and benchmarks to rank models. It's imperfect but directionally accurate (see the footnote on the chart). And the data makes Flash's sweet spot obvious: you get something like 80% of frontier intelligence at 10–25% of the cost. It's the only closed-weight model in what I've labeled "the sweet spot."

Before anyone freaks out about where Opus sits on here and writes all this off: I agree that Opus is clearly smarter than its score suggests, but no matter how smart it is, it's still very clearly in the Frontier box, and that's the main point. Also: vibes > evals 4 life.
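
The sweet-spot claim (roughly 80% of frontier intelligence at 10–25% of the cost) boils down to a ratio. A rough sketch, treating a benchmark index score as a linear measure of intelligence, which it isn't necessarily; the 0.80 and 0.10–0.25 inputs are the approximate figures quoted above, and the function name is hypothetical.

```python
def relative_value(intelligence_ratio: float, cost_ratio: float) -> float:
    """Intelligence per dollar relative to a frontier model (frontier = 1.0)."""
    return intelligence_ratio / cost_ratio


# Approximate figures from the chart: ~80% of frontier intelligence at 10-25% of the cost.
for cost_ratio in (0.10, 0.25):
    value = relative_value(0.80, cost_ratio)
    print(f"{cost_ratio:.0%} of the cost -> {value:.1f}x the intelligence per dollar")
```

Under those assumptions, Flash lands somewhere between about 3x and 8x the intelligence per dollar of a frontier model.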

Why does all this matter? A recent tweet from @chamath reads like a canary in the coal mine:

> Since November 2025, our AI costs have more than tripled and we are now spending many millions per year trending to $10M+ per year.
>
> That, in and of itself, feels very scary to me running a small startup.
>
> Mostly because I do not yet see an equivalent uptick in productivity or revenue...while their revenues may be doubling and tripling every month, ours are not so this is starting to eat into margins.
>
> So logically, I am now wondering how much of this is models running in Ralph loops on behalf of an engineer ambivalent to how much it costs. My suspicion is a lot!

I'm a token maximalist (and I believe Chamath is as well), but not at the expense of running a business into the ground. I want everyone using as many tokens as they can because the hands-on experience is amazing, and there's no substitute for it. But the more you use AI, the more you see all the other places you could use it. You start with one problem and then you figure out it could handle this other thing, and that other thing, and all of a sudden you're thinking about how to process every email through an agent harness (this was me on Tuesday).

What was a small number of calls becomes a giant number of calls. That's the exponential on inference, and we're on the precipice of it. You're going to use a ton of tokens from the smartest model, the fastest model, and the cheapest model (all of them are going to win), and that's exactly when the cost math breaks. You're looking at the price of Opus or even Sonnet or even Gemini Pro at that volume and you're like, this is just not going to work anymore.
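
Volume breaks the math because the per-token price gap stays constant while the absolute bill scales with call count. A sketch of that, assuming a flat blended per-token price; the two prices, the tokens per call, and the call volumes are all hypothetical placeholders, not any provider's actual pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    usd_per_million_tokens: float  # hypothetical blended input+output price


def monthly_cost(tier: Tier, calls_per_month: int, tokens_per_call: int) -> float:
    """Cost in USD for a month of calls at a flat blended token price."""
    total_tokens = calls_per_month * tokens_per_call
    return total_tokens / 1_000_000 * tier.usd_per_million_tokens


# Placeholder prices for illustration only; check current provider pricing.
TIERS = [
    Tier("frontier", 10.00),
    Tier("sweet spot", 1.00),
]
TOKENS_PER_CALL = 5_000  # hypothetical average tokens per call

for calls in (1_000, 100_000, 10_000_000):
    costs = ", ".join(
        f"{t.name}: ${monthly_cost(t, calls, TOKENS_PER_CALL):,.0f}" for t in TIERS
    )
    print(f"{calls:>10,} calls/month -> {costs}")
```

With these placeholder numbers, 1,000 calls a month costs $50 versus $5; at 10 million calls it's $500,000 versus $50,000. The 10x ratio never changes; what changes is whether the difference is a rounding error or a major line item.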

Which is why the sweet spot matters so much. I think the future has room for three model archetypes: the smartest (frontier), the cheapest (the sweet spot, or what I've been calling the $1 intelligence tier), and the fastest (a topic for another post, but I don't think anyone has wrapped their mind around what changes at 1,500–2,000 tokens per second). Flash lives in the cheapest bucket and borrows from the fastest: 135 tokens per second on Artificial Analysis, nearly 3x Sonnet. And it gets wilder when you think about open-weight models in that same tier, like Qwen, Kimi, and DeepSeek, running on Cerebras or Groq. Token maximalism only survives the exponential if the sweet spot keeps getting faster and cheaper. Right now, Flash is the best bet we've got.
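
One piece of what changes at those speeds is plain latency, which is simple arithmetic. A rough sketch, assuming generation time is just output tokens divided by throughput (it ignores time-to-first-token, input processing, and network overhead); the step size and step count are hypothetical, and only the 135 and 1,500–2,000 tokens-per-second figures come from the text above.

```python
def seconds_to_generate(output_tokens: int, tokens_per_second: float) -> float:
    """Generation time only; ignores time-to-first-token, input processing, and network."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


OUTPUT_TOKENS_PER_STEP = 2_000  # hypothetical output size of one agent step
STEPS = 20  # hypothetical number of sequential agent steps

for label, tps in [
    ("Flash, ~135 t/s", 135.0),
    ("fast tier, 1,500 t/s", 1_500.0),
    ("fast tier, 2,000 t/s", 2_000.0),
]:
    per_step = seconds_to_generate(OUTPUT_TOKENS_PER_STEP, tps)
    print(f"{label}: {per_step:.1f}s per step, {per_step * STEPS:.0f}s for {STEPS} steps")
```

Under these assumptions, 20 sequential steps take about five minutes at 135 tokens per second and about 20 seconds at 2,000.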