I've seen gluts not followed by shortages, but I've never seen a shortage not followed by a glut. - Nassim Taleb

Every bubble story follows a similar script: Too much money chases too little reality, causing supply to outstrip demand until the market eventually corrects itself. We've seen it in railroads, tulips, dot-com businesses, and now, as many commentators would lead us to believe, in AI.

The commentators are right in some ways; the AI economy does have a supply problem. But it runs in the opposite direction.

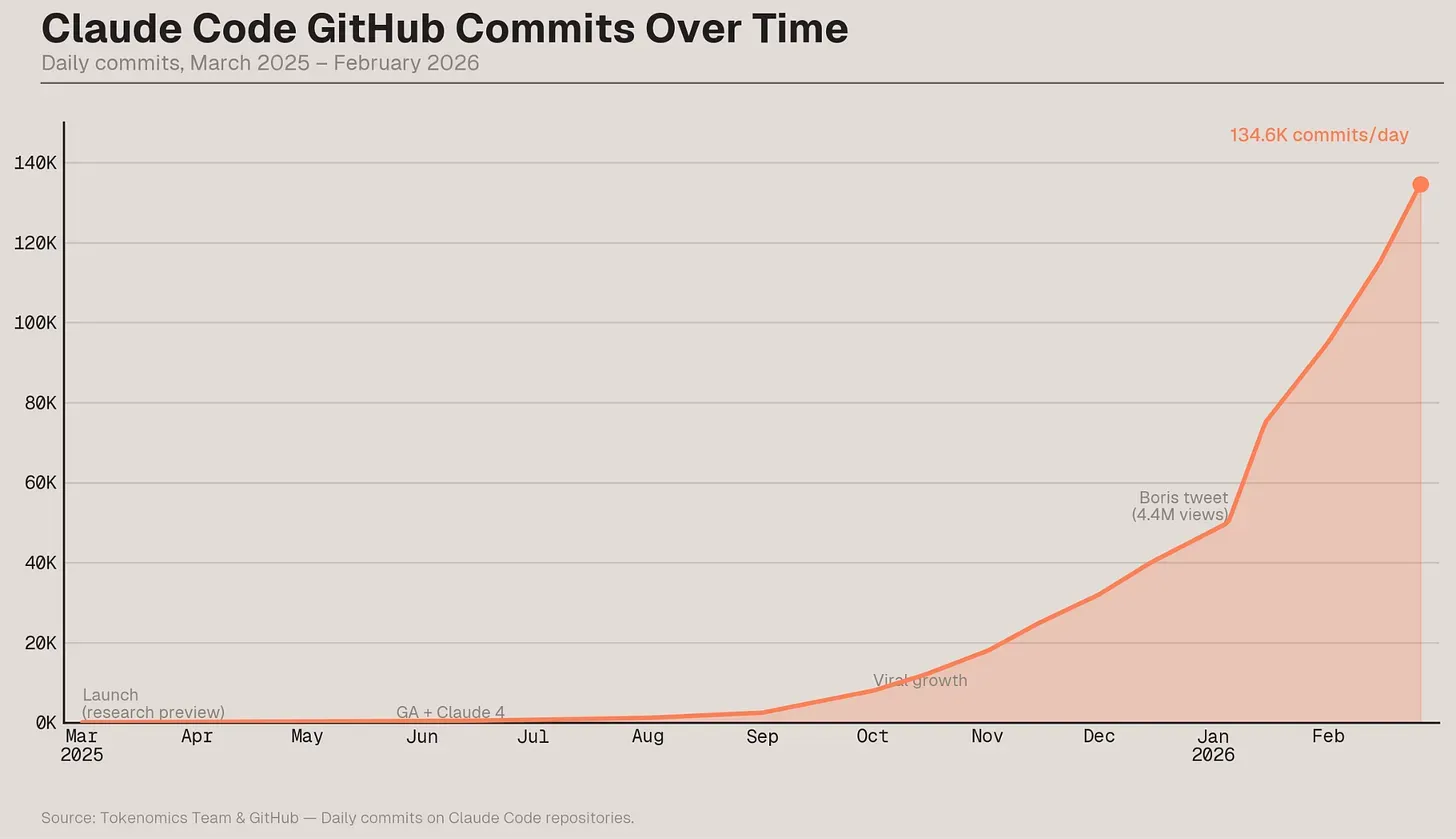

Daily GitHub commits to Claude Code repositories hit 134,800 by February 2026, a proxy for the accelerating integration of AI into software development.

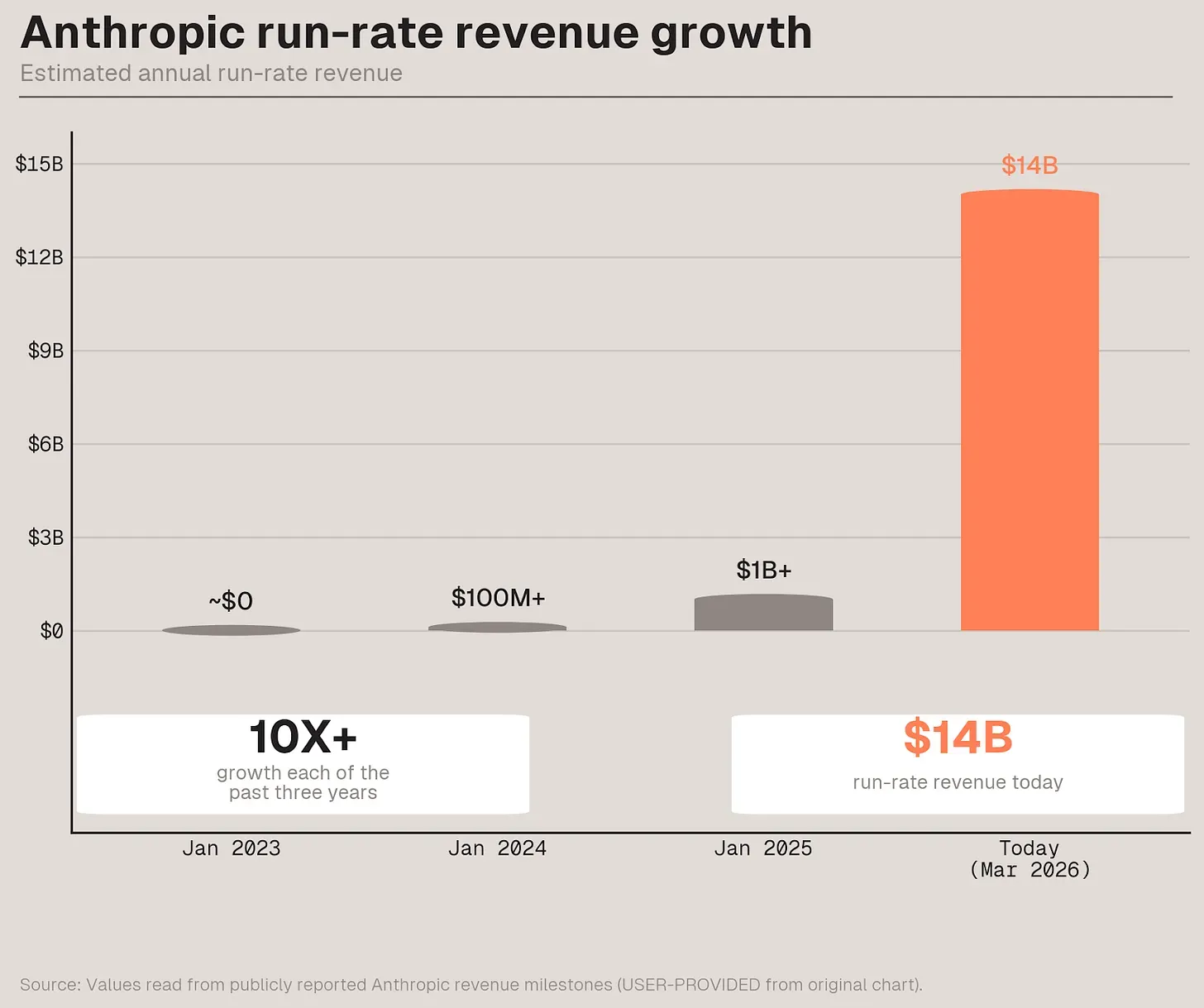

Demand is real: Anthropic's annualized revenue went from zero to $14 billion in three years, and demand is growing faster than the physical world can accommodate. The semiconductor supply chain, from TSMC's fabs to ASML's mirror-polishing rooms to Samsung's memory lines, cannot build fast enough. Microsoft lost $357 billion in market value, partly because it couldn't secure enough GPUs to serve customers willing to pay.

After digging into the data, I've become convinced not only that this isn't a bubble, but that it's an anti-bubble. By that, I mean the foundation model companies don't have enough compute to meet demand, so they throttle customers in the hope of dampening our insatiable demand for intelligence. (If you don't believe this, ask Anthropic why it doesn't offer free trials and wouldn't extend its Claude Max plan to OpenClaw users.)

Anthropic's revenue grew roughly 10x each year, reaching a $14 billion annual run rate by early 2026.

The Revenue That Won't Stop

How did we get here? Start with what Dario Amodei told Dwarkesh Patel in February. Anthropic's revenue trajectory is quite literally unprecedented: from zero to $100 million in 2023, $100 million to $1 billion in 2024, $1 billion to $9–10 billion in 2025, and then in January 2026, the company added "another few billion."

"You would think it would slow down," Amodei said, but it hasn't.

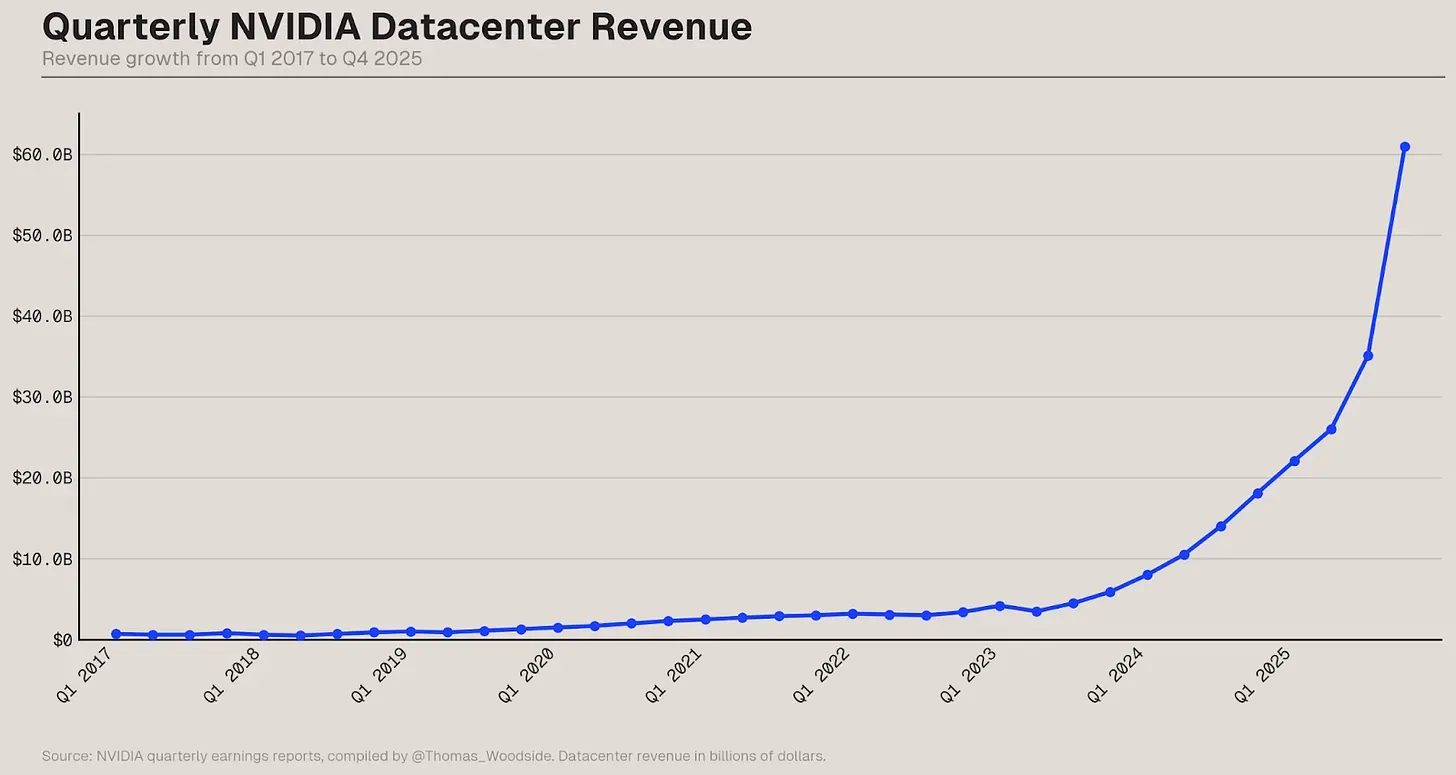

Every hyperscaler's earnings call in late 2025 carried the same refrain: demand exceeded supply. Microsoft's CFO Amy Hood said Azure AI demand "again exceeded supply across workloads, even as we brought more capacity online." Google's CFO described "a tight demand-supply environment." Amazon's CEO: "As fast as we're adding capacity right now, we're monetizing it."

In a bubble, companies build ahead of demand and pray customers materialize. The idea of Pets.com wasn't a bad one; it just came 15 years too early, before enough people were on the internet to support its valuation. With AI, customers are lined up, but the factories haven't been built yet.

NVIDIA's quarterly datacenter revenue shows the staggering and accelerating scale of AI infrastructure spending post-2023.

The AGI-Pilled Gradient

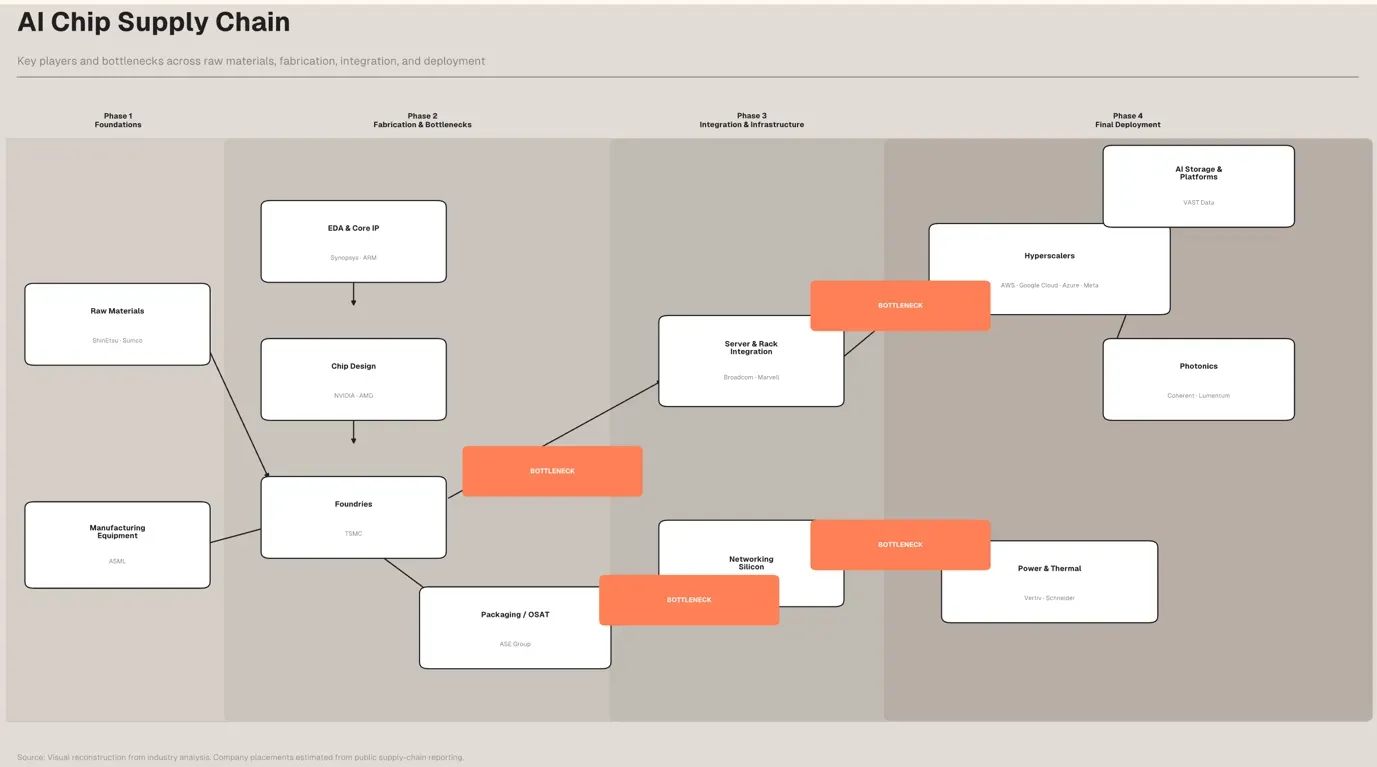

Dylan Patel, founder of SemiAnalysis and a leading voice on all things chips, has the clearest model for why the supply chain keeps getting it wrong. He calls it the AGI-pilledness gradient.

The companies closest to the models—OpenAI, Anthropic, Google—see the demand most clearly. They know what the next generation of models will be capable of and how much compute those models will consume. Nvidia, one step removed, is slightly less aggressive. TSMC, another step down, is even more conservative. And ASML, which builds the machines that make the machines that make the chips, plans for a world that looks like a modest extrapolation of the past.

"OpenAI and Anthropic, they know they need X [chips]," Patel told Dwarkesh. "Nvidia is not quite as AGI-pilled, and they're building X [chips] minus one, and you go down the supply chain, everyone's doing minus one. And in some cases, they're doing divided by two."

The result is a supply chain in which each link underbuilds, and therefore underserves, the one above it.

"Constantly, we're told our numbers are way too high," Patel said. "And then when they're right, they're like, oh, yeah, yeah, but your next year's numbers are still too high."

AI infrastructure starts with silicon and ends with hyperscale. In between: four phases of assembly, each constrained by a different bottleneck.

The Physics of Falling Behind

The gap between AI demand and semiconductor supply isn't stable. It's widening. Ben Thompson put it plainly in Stratechery: "TSMC is the de facto brake on the AI buildout."

TSMC's CapEx was effectively flat through 2023 and 2024, the exact years when AI demand was exploding post-ChatGPT. The company has since ramped: $41 billion in 2025, with $52–56 billion planned for 2026. But TSMC's own CEO, C.C. Wei, admitted the contribution of that spending to 2026 capacity is "almost none," and to 2027 capacity "only a little." New fabs take two to three years to build, so meaningful new capacity won't arrive until 2028 or 2029.

Thompson's observation is the damning one: TSMC's CapEx growth rate is lower than hyperscaler CapEx growth. TSMC's customers are telling the company as much. Wei reported that customers told him "silicon from TSMC is a bottleneck" and asked him "not to pay attention to all others, because they have to solve the silicon bottleneck first."

And then there's ASML. Advanced Semiconductor Materials Lithography (ASML) is the sole manufacturer of extreme ultraviolet (EUV) lithography machines, the tools required to print transistors at 5 nanometers and below. Each one costs $300–400 million, ships in 40 freight containers, and contains over 100,000 parts. The mirrors, made by Carl Zeiss, must be "so smooth that if expanded to the size of Germany they would not have a bump higher than a millimeter."

ASML's EXE:5000 — the most complex machine ever built, and the backbone of next-generation chipmaking. Each unit costs roughly $380 million and takes multiple Boeing 747s to ship.

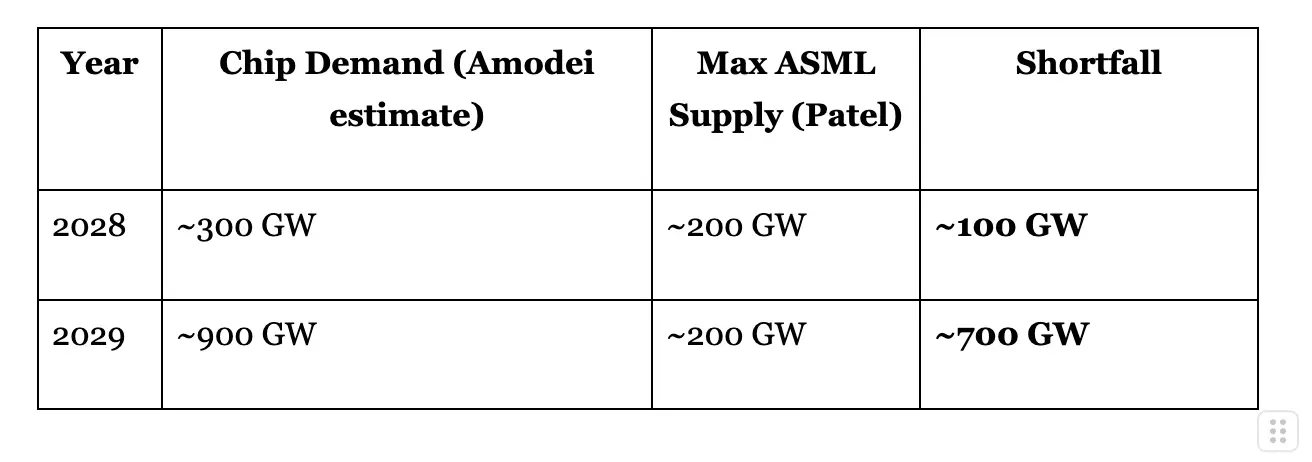

ASML currently produces about 70 EUV tools per year. By the end of the decade, under aggressive assumptions, that number reaches slightly over 100. Patel calculates that each gigawatt of AI compute requires roughly 3.5 EUV tools. That means even with every EUV machine ever made still running, the theoretical maximum is about 200 gigawatts of AI chip capacity by 2030.
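The arithmetic behind that ceiling is easy to check. The sketch below is a back-of-the-envelope reading, not Patel's actual model: it takes the 3.5 tools per gigawatt figure and the production rates quoted above, then backs out the number of tools a 200-gigawatt ceiling implies. The function names and the assumption that capacity scales linearly with tool count are mine.

```python
# Back-of-the-envelope check of the EUV ceiling described above.
# Assumption (not from the source): capacity scales linearly with tool count.

EUV_TOOLS_PER_GW = 3.5  # Patel's estimate, as quoted in the text


def gigawatts_supported(euv_tools: float) -> float:
    """Gigawatts of AI chip capacity that a given number of EUV tools can serve."""
    return euv_tools / EUV_TOOLS_PER_GW


def tools_required(gigawatts: float) -> float:
    """EUV tools needed to serve a given number of gigawatts."""
    return gigawatts * EUV_TOOLS_PER_GW


# Number of tools implied by the ~200 GW theoretical maximum.
implied_tools_in_service = tools_required(200)

# Gigawatts served by one year of tool output, now and at ~100 tools per year.
gw_per_year_now = gigawatts_supported(70)
gw_per_year_2030 = gigawatts_supported(100)

print(f"Tools implied by a 200 GW ceiling: {implied_tools_in_service:.0f}")
print(f"GW served by 70 new tools: {gw_per_year_now:.1f}")
print(f"GW served by 100 new tools: {gw_per_year_2030:.1f}")
```

On this reading, the 200-gigawatt ceiling corresponds to about 700 tools in service, and a full year of today's output adds only about 20 gigawatts' worth.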

Meanwhile, Amodei estimates the industry is building 10–15 gigawatts this year, scaling at roughly 3x annually. That trajectory hits 100 gigawatts in 2028 and 300 gigawatts in 2029. Here is how the supply from ASML looks against the forecasted demand from Amodei.

The exponential demand curve slams into the linear supply ceiling somewhere around 2028. "No one really sees demand for 200 gigawatts a year of AI chips or trillions of dollars of spend a year in the semiconductor supply chain," Patel said. "They're just not AGI-pilled."
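To see roughly where the curves meet, here is a minimal sketch of the demand side. It assumes "this year" means 2026, applies a constant 3x annual growth rate to both ends of Amodei's 10–15 gigawatt range, and treats the 200-gigawatt EUV ceiling as flat. All three are simplifications of mine, not figures from Amodei or Patel.

```python
import math

BASE_YEAR = 2026      # assumption: "this year" in the text
GROWTH = 3.0          # roughly 3x annually, per Amodei's estimate
CEILING_GW = 200.0    # theoretical EUV-limited maximum, treated as flat


def demand_gw(start_gw: float, year: int) -> float:
    """Projected gigawatts being built in `year`, compounding from BASE_YEAR."""
    return start_gw * GROWTH ** (year - BASE_YEAR)


def crossover_year(start_gw: float) -> float:
    """Fractional year at which compounding demand reaches the ceiling."""
    return BASE_YEAR + math.log(CEILING_GW / start_gw) / math.log(GROWTH)


for start in (10.0, 15.0):
    path = ", ".join(
        f"{year}: {demand_gw(start, year):.0f} GW" for year in range(2026, 2030)
    )
    print(f"start {start:.0f} GW -> {path}")
    print(f"  reaches {CEILING_GW:.0f} GW around {crossover_year(start):.1f}")
```

Starting from either end of the range, the projection passes 200 gigawatts between its 2028 and 2029 values, which is consistent with a collision somewhere around 2028.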

And the bottleneck within the bottleneck? Carl Zeiss SMT, the division of the privately held Carl Zeiss AG that makes the optics without which no EUV machine works. Zeiss is owned by a German foundation, not publicly traded, and its semiconductor optics division alone does roughly $5 billion a year in revenue. ASML has taken a 24.9% stake in that division. The entire AI compute buildout, with trillions of dollars in projected demand, funnels through a single division of a foundation-owned optics company that no public market investor can touch directly.

Klumpenrisiko: a real German risk-management term meaning "concentration risk" or "cluster risk." It sounds exactly as alarming as the situation warrants.

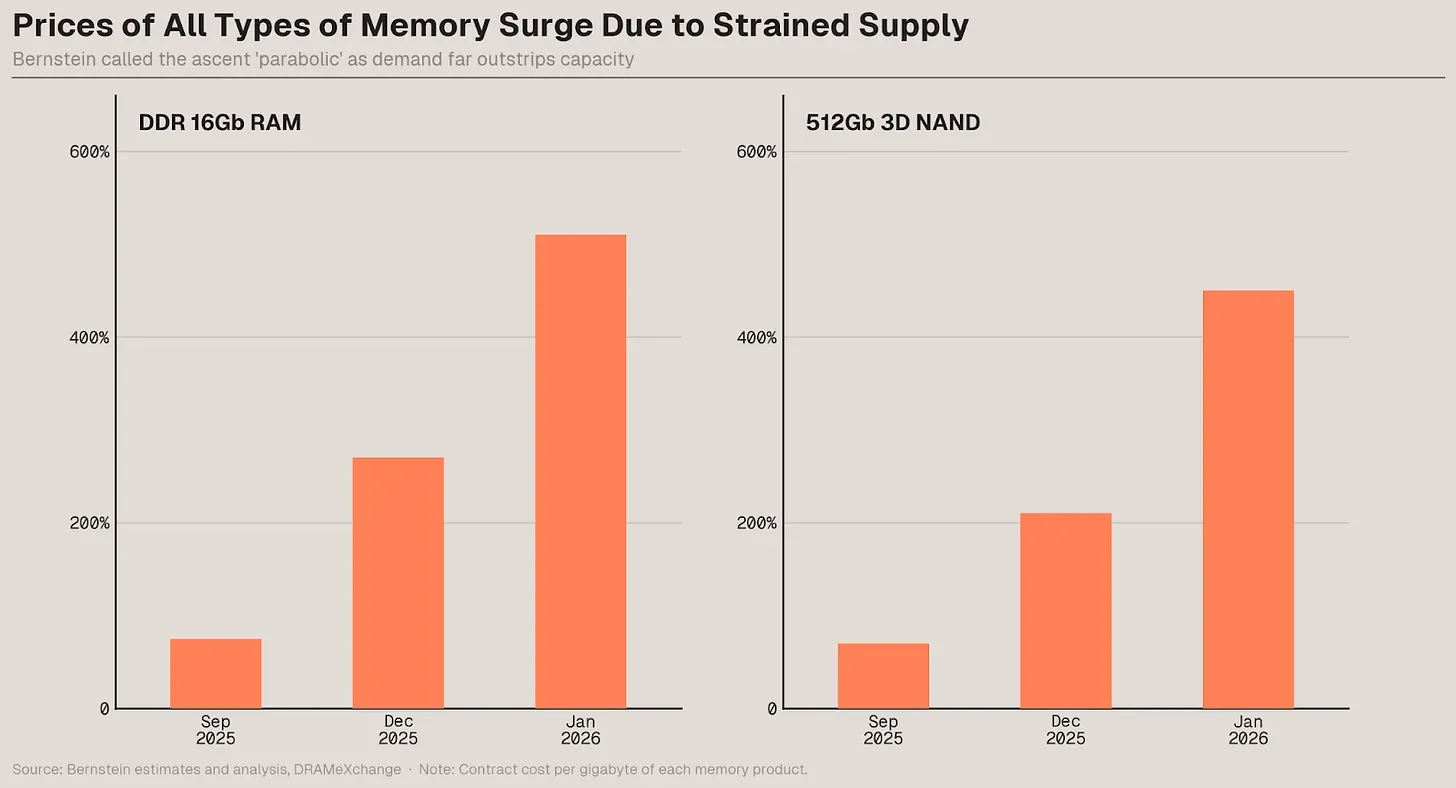

Memory prices have roughly tripled as data centers consume supply that once served consumer electronics.

Collateral Damage

The anti-bubble doesn't just leave money on the table for hyperscalers; it can destroy adjacent industries.

Thirty percent of the Big Four's $600 billion in combined CapEx this year is going to memory. High-bandwidth memory—HBM—delivers 20x the bandwidth of standard DRAM but uses three to four times more wafer area per bit. Memory vendors, who were losing money in 2023, haven't built new fabs in three to four years. As with TSMC, new capacity takes multiple years to come online.

The consequence: memory prices have roughly tripled. And that cost hits everything with a chip, cascading far beyond GPUs and the AI market. Patel projects smartphone volumes dropping from 1.1 billion units to 500–600 million as memory costs add $100–150 to each phone's bill of materials. Xiaomi and Oppo are already cutting low- and mid-range volumes in half. PC gaming forums are full of memes about AI driving up RAM prices.

This is demand destruction, but not the kind bubble theorists imagined. We're already seeing clear evidence that the anti-bubble destroys demand for other products by consuming their supply inputs, making them both scarcer and more expensive. Unlike AI demand, smartphone demand isn't hard to forecast: we have almost twenty years of data to work with. The pattern in prices and performance is just as easy to see: an entry-level phone today far surpasses the best phone from five years ago. But that can and will start to change as more of the supply pipeline for everything in our lives gets rerouted to AI because of the underbuilding down the stack. Consumer electronics will be collateral damage in an infrastructure war they didn't start.

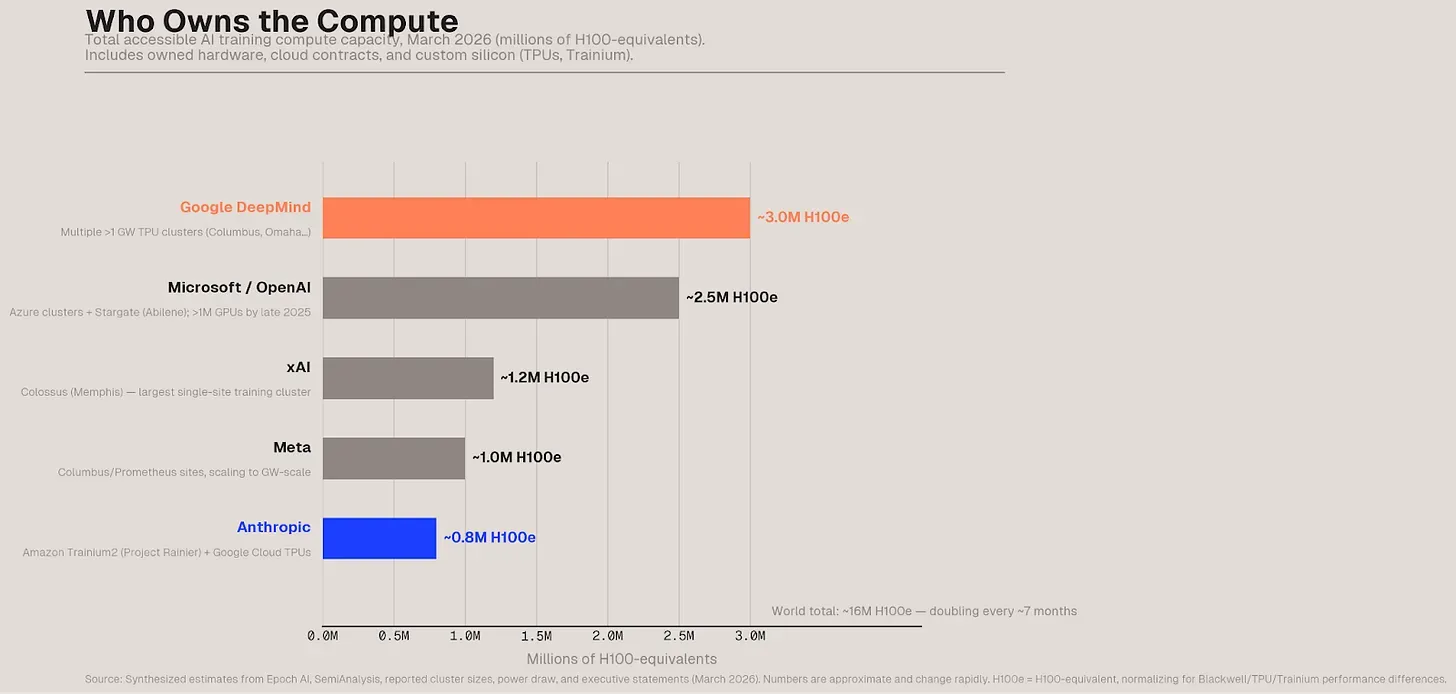

Anthropic has a clear lead in the enterprise, but did it buy enough compute? Will it have to destroy demand or cede it to OpenAI and Google?

The Demand Prediction Trap

Amodei is candid about the bind this creates for the companies closest to the demand signal.

"I could assume that the revenue will continue growing 10x a year," he told Dwarkesh. "I could buy $1 trillion of compute that starts at the end of 2027. If my revenue is not $1 trillion, if it's even $800 billion, there's no force on earth, there's no hedge on earth that could stop me from going bankrupt."

This is the demand prediction trap. Anthropic's revenue has grown 10x a year for three consecutive years. There is no sign that demand for Anthropic's models will slow down any time soon. But no company can bet its existence on unprecedented revenue extrapolation, because being wrong by one year—or having 5x growth instead of 10x—when you're working in numbers with eleven zeroes means insolvency.
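The asymmetry is easier to see with numbers. The sketch below is a deliberately crude illustration: it starts from the upper end of the 2025 revenue range ($10 billion), uses the $1 trillion commitment from Amodei's hypothetical, and defines the shortfall as commitment minus revenue. That definition ignores margins, financing, and every other cost, and the scenario labels are my own.

```python
# Crude illustration of the demand prediction trap. All figures in $ billions.
# Assumption (not from the source): shortfall = commitment - realized revenue.

BASE_REVENUE_2025_BN = 10      # upper end of the $9-10B range cited earlier
COMPUTE_COMMITMENT_BN = 1_000  # the $1 trillion purchase in Amodei's hypothetical


def projected_revenue_bn(base_bn: int, growth: int, years: int) -> int:
    """Revenue after compounding `growth` for `years` years."""
    return base_bn * growth ** years


def shortfall_bn(commitment_bn: int, revenue_bn: int) -> int:
    """Dollars of committed compute not covered by revenue (never negative)."""
    return max(0, commitment_bn - revenue_bn)


scenarios = {
    "10x for two years (the plan)": projected_revenue_bn(BASE_REVENUE_2025_BN, 10, 2),
    "Amodei's $800B example": 800,
    "5x a year instead of 10x": projected_revenue_bn(BASE_REVENUE_2025_BN, 5, 2),
    "10x, but one year late": projected_revenue_bn(BASE_REVENUE_2025_BN, 10, 1),
}

for label, revenue in scenarios.items():
    gap = shortfall_bn(COMPUTE_COMMITMENT_BN, revenue)
    print(f"{label}: revenue ${revenue}B, shortfall ${gap}B")
```

Under these assumptions, hitting the plan exactly leaves no gap, while growing 5x instead of 10x or arriving a year late leaves a hole of $750 billion or $900 billion respectively.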

So Anthropic calibrates to "capture pretty strong upside worlds" without existential risk. OpenAI signed more aggressive five-year deals and now has more compute locked up at better prices. Despite that, neither has enough capacity for AI-hungry businesses and consumers who discover new ways to use the technology every day.

The bind extends down the chain. TSMC won't build more fabs because if AI demand misses by a year, depreciation and lost margin eat it alive. ASML won't double EUV production because if orders don't come in, it has overbuilt the most expensive machines on Earth. Each company manages its own risk rationally. In aggregate, the industry undersupplies.

This is the structural mechanism of the anti-bubble. In a normal bubble, irrational exuberance leads to overbuilding, and the correction is a crash. Here, rational caution leads to underbuilding, and the correction is... more shortages and, almost certainly, inflation in categories like consumer electronics, where low prices have become the norm. The feedback loop runs backward. Every company that hedges against overinvestment makes the supply deficit worse, which validates the demand signal. That makes the next round of forecasts look conservative again, all while making your next iPhone far more expensive.

Tesla's Terafab kicks off March 21st, 2026. What year will it alleviate the bottleneck?

What the Anti-Bubble Means

Amodei projects the AI industry reaching "low hundreds of billions" in revenue by 2028, then trillions before 2030. His math: if each gigawatt of compute costs $10–15 billion a year, and the industry reaches 100–300 gigawatts by 2028–2029, the numbers work.
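As a quick sanity check on that math, the sketch below multiplies the two ranges together. It only computes the implied annual compute bill, which is the amount revenue would eventually have to cover. Pairing the low ends and the high ends of the two ranges is my simplification, not something Amodei specified.

```python
# Implied annual compute spend from the ranges quoted above, in $ billions.
# Assumption (not from the source): low pairs with low, high pairs with high.

COST_PER_GW_BN = (10, 15)   # $10-15 billion per gigawatt per year
CAPACITY_GW = (100, 300)    # 100-300 gigawatts by 2028-2029


def annual_compute_spend_bn(gigawatts: int, cost_per_gw_bn: int) -> int:
    """Yearly cost of running `gigawatts` of compute at a given cost per gigawatt."""
    return gigawatts * cost_per_gw_bn


low_bn = annual_compute_spend_bn(CAPACITY_GW[0], COST_PER_GW_BN[0])
high_bn = annual_compute_spend_bn(CAPACITY_GW[1], COST_PER_GW_BN[1])

print(f"Implied compute spend: ${low_bn / 1000:.1f}T to ${high_bn / 1000:.1f}T per year")
```

The range works out to between $1 trillion and $4.5 trillion a year, which is the scale at which "trillions before 2030" stops sounding hyperbolic.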

Whether those numbers land depends on whether the supply chain can build fast enough. Right now, it can't. Thompson's prescription—that hyperscalers must build up Samsung or Intel as viable competitors to TSMC—is less a strategic recommendation than an observation about what happens if they don't. "If hyperscalers and chip companies don't build up a TSMC competitor," he writes, "they are set to forego billions of dollars in revenue and stunt the AI revolution."

GPU rental prices show little sign of depreciation.

An H100 GPU is worth more today than when it shipped three years ago—because the models it can run now generate more valuable output. That fact alone should give the bubble theorists pause. In a bubble, assets depreciate as reality catches up to hype. In the AI compute market, assets appreciate as demand outruns supply.

The conventional wisdom says the question is whether AI can justify the investment. The data says the investment can't keep up with the AI. We aren't in a bubble. We are in the opposite of one—a world where the real risk isn't that we've built too much, but that we can't build fast enough to capture the demand that is already here.