Context Engineering

Model choice still gets too much of the conversation because it is easy to talk about. Context engineering is harder, but it is where enterprise performance actually comes from. The system gets better when it can think with your records, your examples, your rules, your exceptions, and the strange details that generic software always rounds off.

That is why context engineering matters more than prompt cleverness. Prompts still matter. But the harder question is what the system is able to see, and context is what decides that. It is the layer where documentation, private tokens, and workflow memory become runtime behavior.

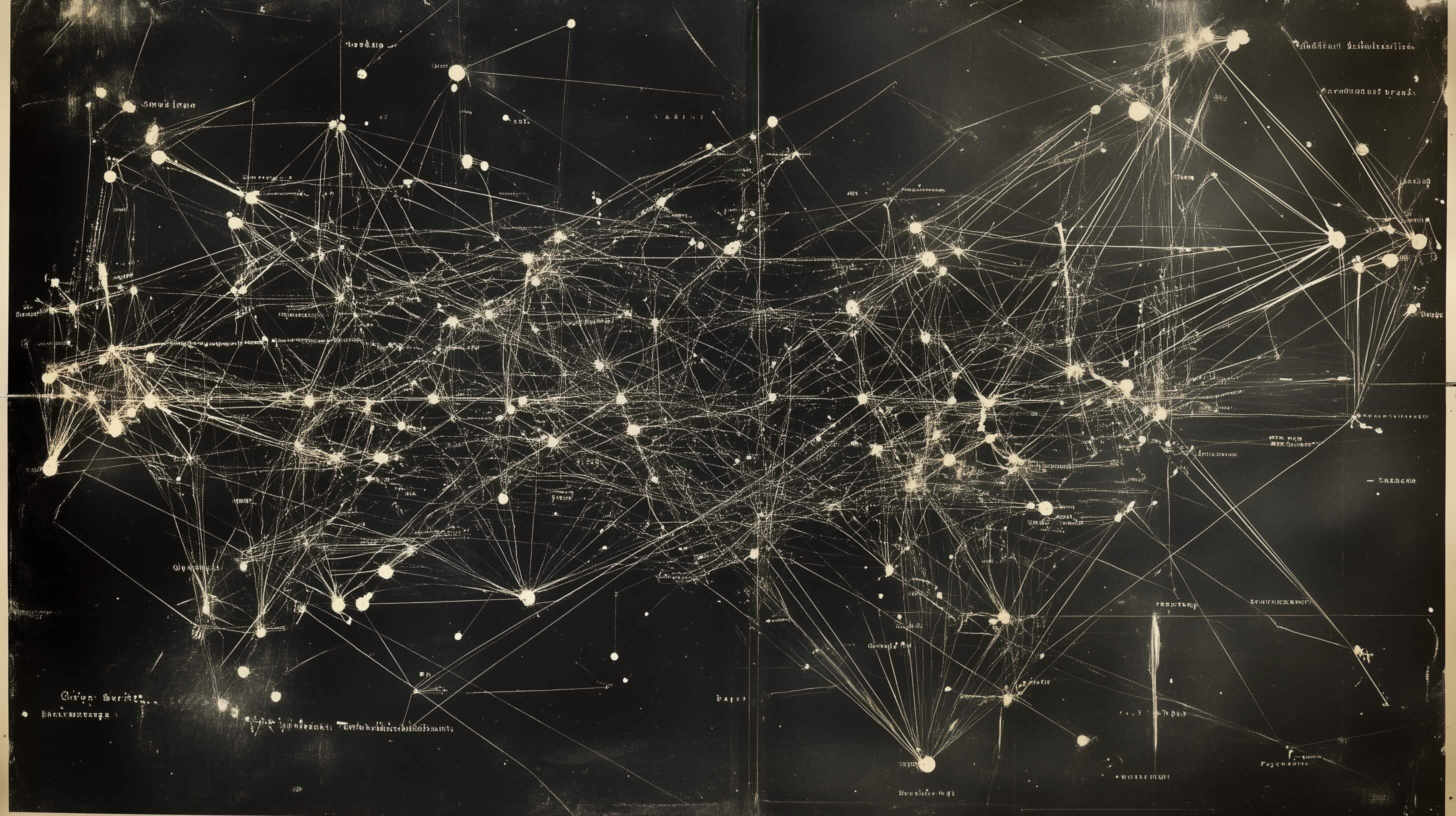

Canonical artifact

What it shows

the context surface

Topology of subscription dependencies

The software surface is already dense. Context engineering decides whether your system can navigate that mess with your records, your rules, and your exceptions intact.

Without a context layer, all this complexity gets flattened back into generic software behavior.

Reviews and signals processed when the context layer is allowed to do real work instead of staying in a note somewhere.

The number of dimensions EY taught its system to evaluate using firm-specific judgment.

Competitor and content assets put into motion when context becomes a strategic asset instead of a forgotten archive.

Beyond Prompting

Prompt engineering had its moment because it was the first thing people could touch. But as soon as the work becomes durable, prompting alone stops being enough. Enterprises do not win because somebody found a slightly better paragraph opener. They win because the system sees the right records, examples, histories, and constraints every time the problem appears.

That is the practical difference between prompt engineering and context engineering. Prompting is an instruction. Context is the environment. If the environment is wrong, no instruction will rescue you for long.

This is also why the token-maximalist instinct matters here. Always err on the side of too much context, not too little. The model is much more often starved than overwhelmed, and the bigger opportunity usually lies in its ability to read, classify, and reason over your material, not just produce one more piece of generic text.

Even the word helps. Context once meant things woven together, not just nearby text. That is the real job here: knit records, examples, rules, and exceptions closely enough that they throw light on the decision the system is making.

Context engineering is the art and science of filling context windows with just the right information for agents to take the next action.
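Read literally, that definition describes a packing problem: a fixed window, more candidate material than fits, and a choice about what goes in first. The sketch below is one minimal way to express it, not a description of any particular product. The `ContextItem` type, the relevance scores, and the four-characters-per-token estimate are all assumptions; a real system would use the model's own tokenizer and a real relevance signal such as retrieval scores.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    # Hypothetical unit of context: a record, example, rule, or exception.
    label: str
    text: str
    relevance: float  # assumed to come from retrieval or a hand-tuned rule


def estimate_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token); swap in a real tokenizer.
    return max(1, len(text) // 4)


def assemble_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Greedily pack the most relevant items into a token budget.

    Leaning toward "too much" context: anything that still fits is included,
    even if a more relevant item before it was too large to fit.
    """
    chosen: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.relevance, reverse=True):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            chosen.append(item)
            used += cost
    return chosen


def render(items: list[ContextItem]) -> str:
    # Label each block so the model can tell rules from examples from records.
    return "\n\n".join(f"[{i.label}]\n{i.text}" for i in items)


if __name__ == "__main__":
    candidates = [
        ContextItem("rule", "Never promise delivery dates in writing.", 0.9),
        ContextItem("example", "Approved reply: " + "x" * 200, 0.7),
        ContextItem("record", "Account history: " + "y" * 40, 0.5),
    ]
    picked = assemble_context(candidates, budget=40)
    print([i.label for i in picked])  # prints ['rule', 'record']
```

With a 40-token budget, the long example is skipped and the shorter record still makes it in. Greedy packing is the simplest choice; it honors the lean-toward-more-context instinct by filling leftover room instead of stopping at the first item that does not fit.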

Private Tokens

Alephic's language for this is private tokens: the way your company names things, the way it reasons, the exceptions it trusts, the stories it repeats, and the documents that quietly run the place. Generic software tends to treat that material as residue. Context engineering treats it as the fuel.

In practice that usually means four buckets: conversations, internal documentation, digital communications, and tribal wisdom. None of that looks dramatic on a systems diagram. It is still where the edge lives.

This is also where No SaaS and context engineering connect. The minute software touches judgment, the question is no longer whether the tool works in the abstract. The question is whether what makes your company yours lives inside the system or outside it.

It is also why systems built for you get stronger over time. Documentation becomes training data. Feedback becomes improvement. The system starts picking up your digital DNA instead of averaging you back toward the median.

Code, AI, and expertise create the possibility. Private tokens are what make the behavior specific.

What It Looks Like

Good context engineering does not begin with a vector database and hope. It begins by deciding what should count as context in the first place. Documentation. Examples. Rules. Operator notes. Exceptions. Feedback after the system ships. The workflow itself.

That is also why so much corporate documentation underperforms in AI systems. A 500-word brand page is fine for a human who already knows the company. It is weak fuel for a machine. The useful version is usually much denser: thousands of words, dozens or hundreds of examples, and enough structure that the system can learn what good actually looks like.
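To make "enough structure" concrete, here is a minimal sketch of turning approved and rejected work into a few-shot block a model can learn from. The `Exemplar` fields (`verdict`, `why`) and the tag layout are assumptions for illustration; the point is that each example carries a judgment and a reason, not just text.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    # Hypothetical record of one piece of work and the judgment made about it.
    text: str
    verdict: str  # "good" or "bad" in this sketch
    why: str      # the reasoning a reviewer would give


def render_exemplars(exemplars: list[Exemplar], per_verdict: int = 3) -> str:
    """Render up to `per_verdict` examples of each verdict, with rationale.

    Pairing good and bad examples shows the model where the line is,
    which a short style description usually cannot.
    """
    blocks = []
    for verdict in ("good", "bad"):
        matching = [e for e in exemplars if e.verdict == verdict][:per_verdict]
        for n, e in enumerate(matching, start=1):
            blocks.append(
                f"<example verdict=\"{verdict}\" n=\"{n}\">\n"
                f"{e.text}\n"
                f"Why: {e.why}\n"
                f"</example>"
            )
    return "\n".join(blocks)


if __name__ == "__main__":
    examples = [
        Exemplar("Built for the long haul.", "good", "Plain, concrete, on-voice."),
        Exemplar("Revolutionary synergy!", "bad", "Generic hype the brand avoids."),
    ]
    print(render_exemplars(examples))
```

A 500-word brand page gives the model one description; a few hundred of these blocks give it a distribution of real decisions to match against.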

One useful internal frame comes from the documentation guide: some material answers, some instructs, some orients, and some teaches. Good context systems need to know the difference. An FAQ is not a tutorial. An org chart is not an exemplar. An onboarding deck is not a decision rule.

Good context has a dual audience: it still helps humans, but it also exposes enough structure for a system to use.
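One way a system can "know the difference" is for each document to carry a small piece of metadata saying which job it does, while the body stays written for humans. The sketch below assumes a hypothetical `Purpose` tag and routes each kind of material into its own prompt section; the section names and their order are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    # The four jobs a document can do, per the frame above.
    ANSWERS = "answers"      # e.g. an FAQ
    INSTRUCTS = "instructs"  # e.g. a decision rule or policy
    ORIENTS = "orients"      # e.g. an org chart
    TEACHES = "teaches"      # e.g. a worked example or exemplar


# Hypothetical mapping from purpose to the prompt section it belongs in.
SECTION_FOR = {
    Purpose.INSTRUCTS: "Rules",
    Purpose.TEACHES: "Examples",
    Purpose.ORIENTS: "Background",
    Purpose.ANSWERS: "Reference",
}
SECTION_ORDER = ["Rules", "Examples", "Background", "Reference"]


@dataclass
class Doc:
    title: str
    body: str         # written for humans, left unchanged
    purpose: Purpose  # metadata added for the system


def build_prompt_sections(docs: list[Doc]) -> str:
    """Group documents by what they do, so an FAQ never poses as a rule."""
    grouped: dict[str, list[Doc]] = {name: [] for name in SECTION_ORDER}
    for doc in docs:
        grouped[SECTION_FOR[doc.purpose]].append(doc)
    parts = []
    for name in SECTION_ORDER:
        if grouped[name]:
            entries = "\n".join(f"- {d.title}: {d.body}" for d in grouped[name])
            parts.append(f"## {name}\n{entries}")
    return "\n\n".join(parts)
```

Putting rules first is a design choice, not a requirement. What matters is that the tag is explicit, so the system does not have to guess whether a page is meant to be obeyed, imitated, or merely consulted.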

1. Capture the material

Start by documenting what already exists so tacit knowledge stops disappearing into meetings and side channels.

2. Find the private tokens

Separate generic background from the records and examples that actually move judgment inside your company.

3. Encode the context

Structure those materials with examples, decision criteria, and metadata so the system can retrieve them, reason over them, and update them in live use.

4. Let the context compound

Keep the loop open after launch so new exceptions, corrections, and examples keep changing the behavior.
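The four steps can be sketched as a single store, assuming a hypothetical `ContextStore` class (not a product API): capture appends material, a flag separates generic background from private tokens, tags make retrieval possible, and operator corrections are written back as new before/after examples so the loop stays open.

```python
from dataclasses import dataclass, field


@dataclass
class Entry:
    text: str
    kind: str  # e.g. "example", "rule", "exception", "correction"
    tags: set[str] = field(default_factory=set)
    company_specific: bool = True  # step 2: generic background is flagged False


class ContextStore:
    """Hypothetical store illustrating the four steps above."""

    def __init__(self) -> None:
        self.entries: list[Entry] = []

    def capture(self, entry: Entry) -> None:
        # Step 1: write down what already exists.
        self.entries.append(entry)

    def private_tokens(self) -> list[Entry]:
        # Step 2: keep only the material that moves judgment here.
        return [e for e in self.entries if e.company_specific]

    def retrieve(self, tag: str) -> list[Entry]:
        # Step 3: structured metadata (kind, tags) is what makes lookup work.
        return [e for e in self.private_tokens() if tag in e.tags]

    def record_correction(self, original: str, corrected: str, tag: str) -> None:
        # Step 4: an operator's fix is captured as a new before/after example,
        # so the next retrieval for this tag already reflects it.
        self.capture(
            Entry(
                text=f"Before: {original}\nAfter: {corrected}",
                kind="correction",
                tags={tag},
            )
        )
```

Generic background is stored but kept out of retrieval, which mirrors step 2: the point is to separate it from the private tokens, not to throw it away.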

Proof in the Work

Context at Amazon scale

Amazon encoded brand voice, product context, and creative judgment into a system that lets copy scale without flattening into generic language.

Private tokens as system behavior

EY turned a 25-point rubric and a huge document corpus into a scoring engine that behaves like the firm, not like the average internet user.

Context that keeps compounding

Lands' End used catalog, merchandising, and operator feedback as live context so the system could make better layout, image, and copy choices.

Related Reading

Documentation as a Strategic Asset

The clearest internal source on why documentation stops being admin work and starts becoming leverage.

Essay: Strategic Software

The underlying Alephic idea: when strategy becomes software, your context is no longer background information. It is part of the system.

Essay: Things I Think I Think About AI

The page where Noah makes the prompt-engineering versus token-maximalist turn explicit.

Glossary: Private Tokens

The glossary entry for the company-specific knowledge, norms, and judgment patterns generic software can never fully own for you.

Essay: Software Built For You

Why systems built for one company get stronger over time: documentation becomes training data, feedback becomes improvement, and private tokens stay private.

Essay: Don’t Let SaaS Train on Your Private Tokens

The sharpest articulation of why company-specific language is digital DNA and why generic software should not get to train on it.

Pillar Page: No SaaS

Why this matters economically: the moment software touches your edge, the context has to live with you instead of inside a vendor schema.

Models are table stakes

The edge comes from the context layer: the part of the system that knows your history, your language, and what the workflow keeps teaching it.

Further Reading

Effective context engineering for AI agents

Anthropic on effective context engineering for agents and why performance depends on what enters the system, not just the model.

Essay: The rise of context engineering

LangChain on the rise of context engineering as a category distinct from prompt engineering.

Guide: Context Engineering Guide

A practical guide for the emerging category and the kinds of context strategies product teams are actually using.

Essay: Context Engineering: 5 Familiar Strategies from Real Product Teams

A product-team view of context engineering that helps explain why this is not just a prompting problem.

Need systems that think with your actual context?

We turn documentation, examples, and workflow memory into context layers so enterprise AI systems stop sounding generic and start behaving like your company.